Education is “predictably disappointing” and should never be relied upon alone to improve safety

A recent editorial in the May 2020 issue of BMJ Quality & Safety offers a noteworthy explanation of why education alone is a weak, low-value improvement intervention.1 The editorial discusses an evaluation of a national education program in Australia aimed at reducing outpatient proton-pump inhibitor (PPI) prescriptions. The evaluation found no significant changes in the program’s primary outcomes, discontinuation or dose reduction. The authors acknowledge that educational initiatives alone are unlikely to make the inroads required to curb PPI prescribing, and they offer several salient reasons why such initiatives fail to produce results. They first review numerous studies that have found negligible or no improvement in practitioners’ behavior or clinical outcomes after education. Noting healthcare’s over-reliance on this low-value intervention, the authors conclude that education is “predictably disappointing among improvement efforts,” earning it a “necessary but insufficient” status among improvement interventions.1

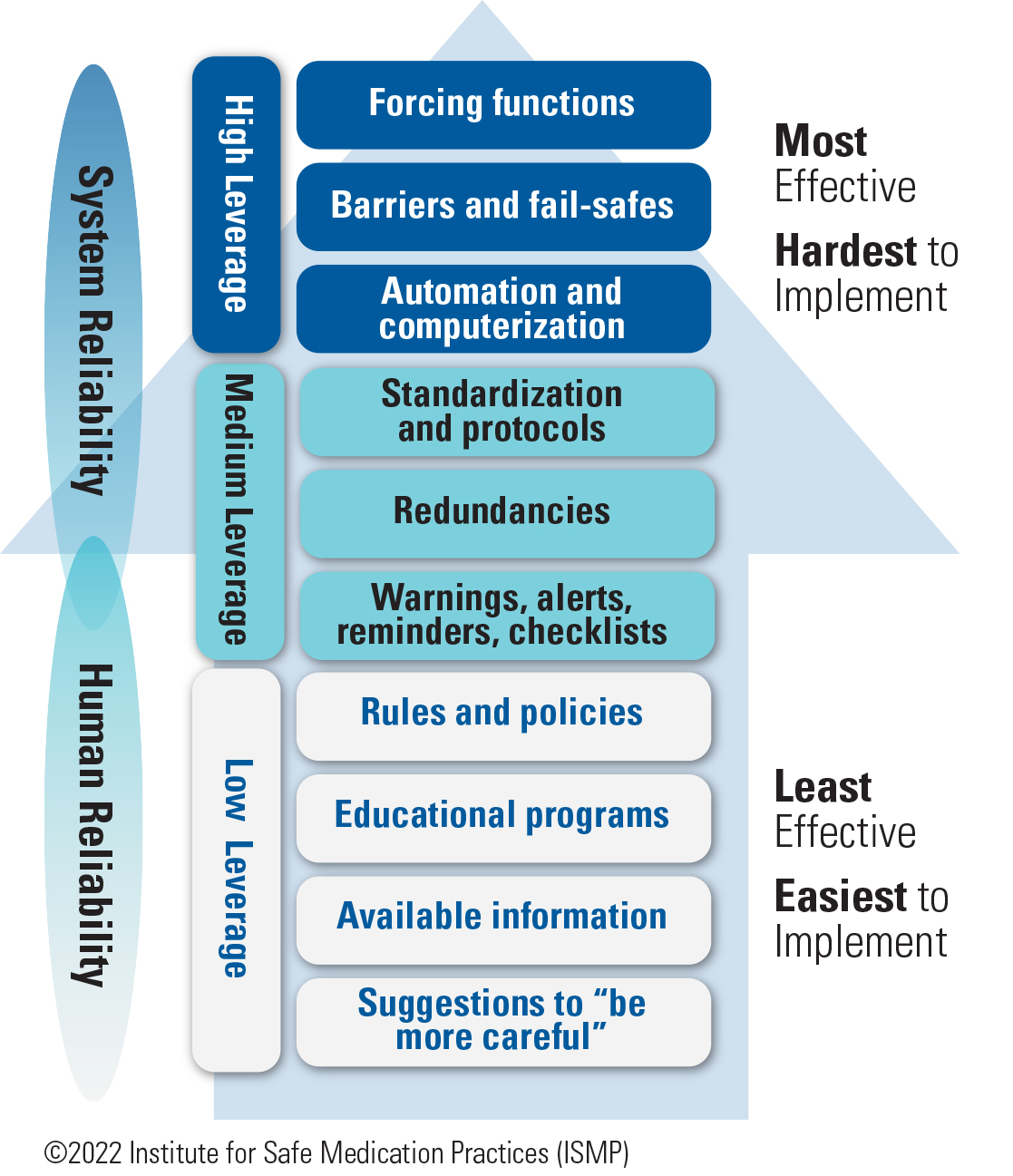

ISMP agrees that education alone is a weak improvement strategy. Education has its place as a basic prerequisite: it provides healthcare practitioners with the knowledge (what they know) needed to develop the skills (how they apply that knowledge) to do their job well. For example, education about new medications, devices, automation, processes, and known risks is fundamental to forming a well-qualified complement of practitioners to manage medication safety. But while knowledge and skills are a necessary first step, education ranks among the least effective interventions in ISMP’s hierarchy of effectiveness of risk-reduction strategies (Figure 1), just below rules and policies, and far below more effective system-focused strategies such as forcing functions, barriers and fail-safes, and automation. ISMP has long noted that the improvement strategies with the greatest and most sustainable impact on patient safety are those that make it hard for practitioners to do their job wrong and easy for them to do it right. Education never achieves this goal, and it is a weak improvement strategy when used alone, for the following reasons.

Education relies heavily on human memory and vigilance

When a risk or error is linked to a knowledge deficit, education may be required to give practitioners the knowledge they need to do their job. However, applying that new knowledge depends heavily on human memory and vigilance. Education does not guarantee that the new information has been learned, that it will be correctly applied in the right circumstances, or that it will lead to the desired skills. Additionally, knowledge and skills may erode over time, especially if they are not needed or not reinforced through routine activities.

Human factors research offers several explanations for why individuals may forget new information or fail to apply new knowledge correctly.

- Distractions or lack of attention during the educational process may mean that the new knowledge was never properly encoded in memory, so it was never really “learned.”2,3

- Everyone learns differently and at a different pace; people differ in their natural, habitual, and preferred ways of absorbing, processing, and retaining new information and skills.4 Thus, the methods and duration of an educational experience may not be optimal for every practitioner.

- New knowledge and skills fade over time, particularly if they are not applied repeatedly, so individuals may simply forget what they have learned by the time it is needed.2,3 Thus, rarely used knowledge or rarely performed activities require just-in-time education rather than a once-and-done educational program.

- New information may conflict with old knowledge, and this competition can hamper learning.4

- The absence of appropriate retrieval cues may contribute to forgetting what has been learned.2,3 Access to knowledge, and our intention to act on it, depend on memory cues that trigger retrieval of what we have learned; without them, we may fail to remember and apply the new knowledge in an appropriate situation.

Education does not solve memory slips or lapses

Education does little to address human error unless a knowledge deficit is uncovered. For example, a mental slip or lapse that leads a practitioner to forget one step of a complex medication-use process, such as verifying patient allergies1 or a medication’s expiration date, is different from a mistake caused by a lack of knowledge. Human factors experts firmly agree that education will not lessen the unpredictable, transitory mental states that lead to human error, such as forgetfulness, preoccupation, and distractibility.5 Nor would education lead to improvement if a physician prescribed an overdose by transposing the digits of a dose or inadvertently selected a look-alike product from a drop-down menu. Simply educating practitioners about the importance of checking allergies and expiration dates, or about avoiding overdoses or selecting the wrong drug from drop-down menus, will achieve little or no reduction in errors.

Instead, these types of human errors require system-level interventions to ‘design out’ hazards through automation and forcing functions that prevent transposed numbers from leading to serious overdoses, drug name search requirements that avoid look-alike product names in drop-down menus, or just-in-time cues and prompts to check patient allergies or expiration dates. Education is only appropriate when a knowledge deficit leads to a human error. Even then, education should be bundled with other more effective risk-reduction strategies and not relied upon solely as an improvement strategy.

Education does not easily change habits or at-risk behaviors

Education alone may do little to change unsafe, complex behaviors that have become entrenched as habits. Many believe that education can not only get practitioners to engage in an action, but also change their unsafe behavior. However, there is widespread agreement that information and education alone will not translate into behavioral change.6 Individuals develop unsafe habits and behaviors for reasons that are often complicated and multifactorial, involving attitudes, beliefs, motivation, ability, perceived threats, social norms, and cultural issues. The role of habit is largely underappreciated, and education alone will not lead to better organizational performance because individuals soon revert to their old ways of doing things.

Likewise, addressing at-risk behaviors, particularly rule-breaking or procedural deviations, is not as easy as merely re-educating practitioners. In most cases, practitioners already know, and have been trained in, the procedure or rule.7 Most at-risk behaviors stem from system failures that lead practitioners to work around them. These behaviors are rarely associated with a lack of knowledge, but rather with a lack of awareness of the risk involved in the task or in deviating from the approved process. Reliance on re-education creates an illusion of managing the risk while doing little to improve behavior and performance. In both cases, unsafe habits and at-risk behaviors alike, the systems that drive and reward those habits and behaviors must be redesigned, and coaching conversations are needed to raise practitioners’ perception of risk.

Education does little to change system reliability

Education is provided in an attempt to improve human reliability, not system reliability. Reliability is less attainable in humans, who are inherently fallible, than in systems, which can be designed to eliminate risks or errors and can lead to transformational improvements. Education aimed at improving human reliability does little to redesign vulnerable systems. In hospitals, the misuse of insulin pens for more than one patient is a prime example of a problem that education alone has not solved.8 In fact, education alone may set practitioners up to fail: organizations that simply educate practitioners about how to achieve better outcomes within poorly designed systems are bound to be unsuccessful.9

Education requires frequent repetition

Even education needed for common, everyday situations and activities is difficult to sustain, because practitioner turnover necessitates periodic re-delivery of the same content.1 This problem affects all organizations, as new practitioners who need the information are hired regularly, but it is especially apparent in teaching hospitals, where students, interns, and residents frequently rotate in and out of specialty areas and units. Additionally, periodic re-delivery of the same education may be necessary to reinforce learning, since its usefulness relies heavily on human memory and vigilance.

Other influencing factors

Educating practitioners about desired behaviors may not be enough if there is external pressure to behave differently. For example, education about proper antibiotic prescribing does not make it any easier to dissuade patients who visit prescribers specifically for a prescription for antibiotics to treat a viral infection. Nor does education to avoid screening men over 75 years of age for prostate cancer equip practitioners with the materials or communication techniques necessary to reassure patients interested in such screening.1 Likewise, all the education in the world about preventing overutilization of diagnostic tests is not going to change the fact that a negative x-ray is first required by insurers before magnetic resonance imaging (MRI) can be ordered.

Conclusion

Selecting the best improvement strategy is not easy. Too often, an educational intervention is chosen without first determining whether a lack of knowledge is plausibly the main cause. Even then, solving the problem with education alone is seldom successful.1 Beer et al. describe organizations’ heavy investment in employee training as the “great training robbery,” noting that organizations spend large amounts of money and time on training without a good return on their investment.10

While education has been healthcare’s go-to response to quality and safety problems in the past, it is time for a new, more effective approach. A single strategy, particularly one as weak as education, is not enough to change behaviors and prevent errors. Instead, multiple high-leverage risk-reduction strategies that improve system reliability (Figure 1) must be layered together, on top of education, to create a more robust safety system. This layering is important for organizations in their quest to achieve highly reliable outcomes. A table of key safety strategies for improvement, including examples with high-alert medications, is available in a previous ISMP newsletter.11

References

- Soong C, Shojania KG. Education as a low-value improvement intervention: often necessary but rarely sufficient. BMJ Qual Saf. 2020;29(5):353-7.

- Klimesch W. The structure of long-term memory: a connectivity model of semantic processing. New York, NY: Psychology Press; 2013.

- Weiten W. Psychology: themes and variations. 10th ed. Boston, MA: Cengage Learning; 2017.

- Hatami S. Learning styles. ELT Journal. 2013;67(4):488-90.

- Reason J. Human error. New York, NY: Cambridge University Press; 2003.

- Vogus TJ, Hilligoss B. The underappreciated role of habit in highly reliable healthcare. BMJ Qual Saf. 2016;25(3):141-6.

- Outcome Engenuity. Just culture training for healthcare managers. Eden Prairie, MN: Outcome Engenuity; 2018.

- ISMP. A crack in our best armor: “wrong patient” insulin pen injections alarmingly frequent even with barcode scanning. ISMP Medication Safety Alert! 2014;19(21):1-5.

- Haskins J. 20 years of patient safety. Association of American Medical Colleges (AAMC) News. June 6, 2019.

- Beer M, Finnström M, Schrader D. Why leadership training fails—and what to do about it. Harvard Business Review. 2016;50-7.

- ISMP. Your high-alert medication list—relatively useless without associated risk-reduction strategies. ISMP Medication Safety Alert! 2013;18(7):1-5.
