Using Information From External Errors to Signal a “Clear and Present Danger”

Chances are you’ve scanned the headlines and read many of the stories about medication errors published in the ISMP Medication Safety Alert!, particularly the tragic ones. A few examples of the tragic errors we’ve published in the past few years include:

- The death of a 2-year-old boy who placed a used fentaNYL patch in his mouth after he ran over it with his toy truck in his great-grandmother’s room at a long-term care facility

- A 4-year-old boy who died after he was given oral chloral hydrate before a procedure and was strapped onto a papoose board without proper positioning of his head to protect his airway

- An unventilated trauma patient who died after receiving intravenous (IV) vecuronium when a physician mistakenly entered an order for the paralyzing agent into the wrong patient’s electronic record

- A 65-year-old woman who died in the emergency department after receiving IV rocuronium instead of fosphenytoin

- A 43-year-old woman who died because of an accidental overdose of fluorouracil that was administered over 4 hours instead of 4 days

- The death of a 12-year-old child with congenital long QT syndrome after a physician unknowingly prescribed a medication that prolongs the QT interval and increases the risk of torsades de pointes

You’ve also likely read about recurring fatal errors that continue despite repeated descriptions of these events in our newsletters, often in our Worth Repeating feature, and recommendations to prevent these and other repetitive types of errors highlighted in the ISMP Targeted Medication Safety Best Practices for Hospitals. For example, in 2015, we wrote about:

- Yet another patient, this time a 51-year-old hospitalized man, who died from an anaphylactic reaction largely because EPINEPHrine was not available, and the nurse felt she could not act without an order or protocol to administer the drug

- Yet another patient, an elderly man, who died after he decided to double his weekly oral methotrexate dose to treat worsening rheumatoid arthritis symptoms, without knowing the consequences of increasing the dose

As you’ve read about these tragic medication errors, you’ve probably felt surprised, saddened, anxious, unsettled, and perhaps even a little angry or frustrated, as we often feel at ISMP when these errors continue to harm patients. These initial gut feelings cause you to feel leery about errors, even if you can’t put your finger on the exact cause of your uneasiness.1 Unfortunately, we have a tendency to gloss over these initial gut feelings and treat many errors as inconsequential in our own lives and work.1 Thus, the tragic medication errors you hear about may be compelling, but are perhaps felt to be irrelevant to your practice—a sad story, but not something that could happen to you or at your practice site. People tend to “normalize” the errors that have led to tragic events, and subsequently, they have difficulty learning from them.

Biases that make it difficult to learn from others’ mistakes

There are several attribution biases that lead to normalization of errors and thwart our learning from mistakes, particularly the mistakes of others. Attribution biases refer to the way we evaluate or try to find reasons for our own behavior and others’ behavior. Unfortunately, these attributions do not always mirror reality.

First, we tend to attribute good outcomes to skill and bad outcomes to sheer bad luck—a bias called self-serving attribution.2 We have a relatively fragile sense of self-esteem and a tendency to protect our professional self-image (and the image of our workplace) by believing the same errors we read about could not happen to us or in our own organization. It was just terrible luck that led to the bad outcome in another organization, soon to be forgotten by all except the few who were most intimately involved in the event.

Next, we tend to quickly attribute the behavior of others to their personal disposition and personality, while overlooking the significant influence of external situational factors. This is called fundamental attribution bias. However, we tend to explain our own behavior in light of external situations, often undervaluing any personal explanations. This is known as the actor-observer bias. When we overestimate the role of personal factors and overlook the impact of external conditions or situations in others’ behavior, it becomes difficult to learn from their mistakes because we chalk them up to being caused by internal, personal flaws that don’t exist in us.3 This tendency to ascribe culpability to individual flaws increases as the outcome becomes more severe—a bias called defensive attribution—making it especially hard to learn from fatal events.

Finally, we tend to be too optimistic and overconfident in our abilities and systems,2 particularly when assessing our vulnerability to potentially serious or fatal events. We thirst for agreement with our expectations that the tragic errors we read about could not happen in our workplace, seeking confirmation about our expectations of safety while avoiding any evidence of serious risk.1,2 We may even go through the motions of looking at our abilities and systems to determine if similar errors might happen in our organizations, but in the end, we tend to overlook any evidence that may suggest trouble (much like confirmation bias in which we see what we expect to see on a medication label, failing to see any disconfirming evidence). We subconsciously reach the conclusions we want to draw when it comes to assessing whether our patients are safe.2

Seeking outside knowledge

Experience has shown that a medication error reported in one organization is also likely to occur in another, given enough time. Much knowledge can be gained when organizations look outside themselves to learn from the experiences of others. Unfortunately, recommendations for improvement, often made by those investigating a devastating error, go unheeded by others who feel they don’t apply to their organization. Still others have committees that are working on tough issues and doing their best, but they may only have an internal focus. Real knowledge about medication error prevention will not come from a committee with only an internal focus. A system cannot understand itself, regardless of the number and quality of investigations and root cause analyses conducted on internal errors. Quality guru Dr. W. Edwards Deming summarized this phenomenon by noting that organizations with an internal focus “may learn a lot about ice, yet know very little about water.”4

This concept is applicable to learning from your internal errors, too. Seeking outside knowledge from the literature or experts in the field when reviewing your own errors can open your eyes to vulnerabilities that are hard to see within the processes you have built or work in every day. Even including staff from a different department when reviewing errors can be an eye-opening experience. Knowledge from the outside is necessary and provides us with a lens to examine what we are doing, suggestions for what we might do differently, and a roadmap for improvement.5

External resources also provide us with a wealth of information that can be used to make the safest decisions when providing patient care. When we stand at a fork in the road and are unsure where each one leads, it would be foolish to choose whichever “seems” best without looking at information easily within our reach from others who have already traveled each road.

The Centers for Medicare & Medicaid Services (CMS) agrees. In its Interpretive Guidelines for Hospitals (§482.25 [a]), Condition of Participation: Pharmaceutical Services, CMS requires hospitals to take steps to prevent, identify, and minimize medication errors, and for hospital pharmacies to “be aware of external alerts to real or potential pharmacy-related problems in hospitals.”

Overcoming biases to allow learning

To best promote patient safety, it is crucial to seek out information about external errors, to hold on to your initial feelings of surprise and uncertainty when you read about these errors, and to resist the temptation to gloss over what happened or attribute the problem to an individual different from you.1 It is in the brief interval between the initial unease when reading about an external error and the normalization of error (convincing yourself that it couldn’t happen to you) that significant learning can occur. For that reason, ISMP highly recommends sharing stories of external errors with staff, such as those published in the ISMP Medication Safety Alert! and summarized each quarter in the ISMP Quarterly Action Agenda.

The ISMP Quarterly Action Agenda was initiated 19 years ago for the purpose of encouraging organizations to use information about safety problems and errors that have happened in other organizations to prevent similar problems or errors in their practice sites. The Agenda is prepared for an interdisciplinary committee to stimulate discussion and action to reduce the risk of medication errors. Each item in the Agenda includes a brief description of the medication safety problem, a few recommendations to reduce the risk of errors, and the issue number to locate additional information. The Agenda is also available in a Microsoft Word format that allows organizational documentation of an assessment, actions required, and assignments for each agenda item. The latest Quarterly Action Agenda was published in the January 26, 2017, newsletter and will continue to be published every quarter in the January, April, July, and October issues.

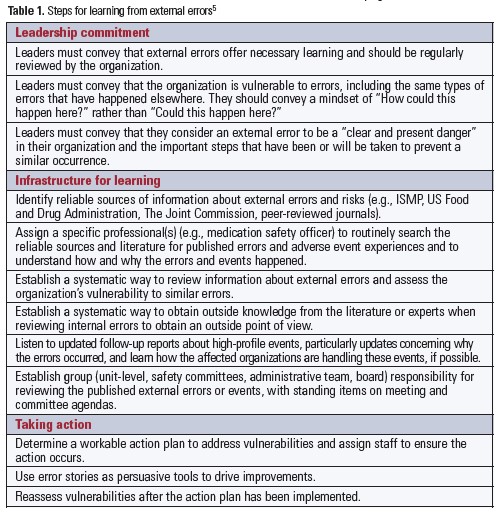

Additional steps organizations should take to establish a system for ongoing risk identification and learning from external errors can be found in Table 1.

Conclusion

The only way to make significant safety improvements is to challenge the status quo, to inspire and encourage all staff to track down “bad news” about errors and risk, both internal and external, and to learn from that “bad news” so that targeted improvements can be made. We need to shatter the assumption that systems are safe until proven dangerous by a tragic event. No news is not good news when it comes to patient safety. Each organization needs to accurately assess how susceptible its systems are to the same errors that have happened in other organizations and acknowledge that the absence of similar errors is not evidence of safety. Personal experience is a powerful teacher, but the price is too high if we only learn from firsthand experiences. Learning from the mistakes of others is imperative.

References

1. Weick KE, Sutcliffe KM. Managing the Unexpected. San Francisco, CA: Jossey-Bass; 2001.

2. Montier J. The limits to learning. In: Montier J. Behavioural Investing: A Practitioner’s Guide to Applying Behavioural Finance. New York, NY: John Wiley & Sons, Inc.; 2007:65-77.

3. Stangor C. Biases in attribution. In: Stangor C, Jhangiani R, Tarry H, eds. Principles of Social Psychology. 1st international ed. Vancouver, BC: BC Campus OpenEd; 2011:737-81.

4. Deming WE. A system of profound knowledge. In: Deming WE. The New Economics for Industry, Government, Education. Cambridge, MA: MIT Center for Advanced Engineering Services; 1994:101.

5. Conway J. Could it happen here? Learning from other organizations’ safety errors. Healthcare Exec. 2008;23(6):64,66-67.